Server Configurations Frequently Used for Your Web Application

This tutorial provides an overview of frequently used server configurations, with a brief explanation of each along with its advantages and disadvantages. Keep in mind that these concepts can be combined in different ways and that each environment has unique demands, so the right configuration depends heavily on your specific requirements.

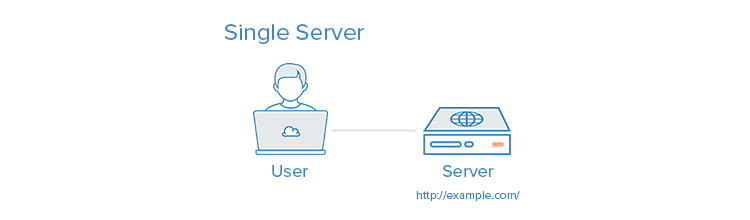

Getting everything configured on a single server.

A single-server configuration hosts the entire system on one server. For a typical web application, that includes the web server, application server, and database server. A popular version of this configuration is the LAMP stack, which stands for Linux, Apache, MySQL, and PHP, all running on a single server. This setup is commonly used when you need to get an application running quickly. It is well suited to testing an idea or standing up a basic web page.

Unfortunately, this setup offers little in the way of scalability or component isolation. The application and the database compete for the same server resources, such as CPU, memory, and I/O. This can lead to poor performance and make the root cause hard to identify. A single server also does not lend itself to horizontal scaling. For more on horizontal scaling, see our tutorial on understanding database sharding. Additionally, you can learn more about the LAMP stack in our tutorial on installing LAMP on Ubuntu 22.04. Below is a visual depiction of a single-server setup.

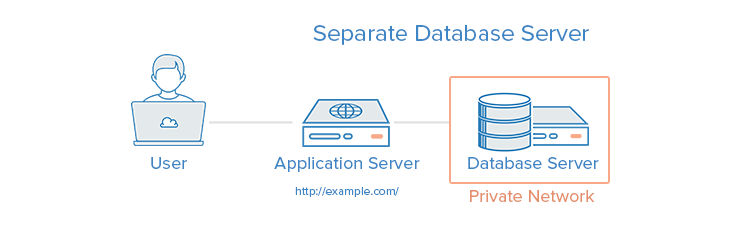

Creating a distinct server for database operations.

The database can be moved to its own server, separate from the application. This removes the resource contention between the application and the database, and it can increase security by taking the database out of the DMZ, or public internet.

One use case is when you want to get your application set up quickly while keeping the application and database from competing for the same system resources. You can also scale the application and database tiers independently by adding resources to whichever server needs more capacity. Additionally, depending on your configuration, this can improve security by moving your database out of the DMZ.

A separate database server is somewhat more complex to set up than a single server. If the two servers are geographically far apart, the network connection between them can introduce high latency and cause performance problems. Additionally, insufficient bandwidth for the amount of data being transferred can also lead to performance issues. To learn more about optimizing site performance with MySQL, you can refer to the guide “How To Set Up a Remote Database.” Here is a visual depiction of a separate database server:

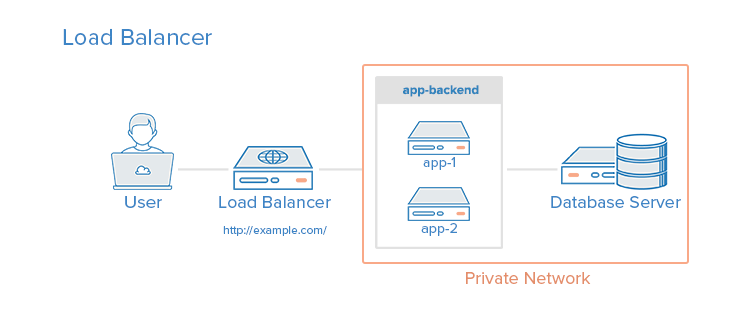
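
To get a feel for how network latency between the application server and the database server adds up, here is a rough back-of-the-envelope sketch. The function name and every number in it (queries per page, round-trip times, execution time) are illustrative assumptions rather than measurements, and it assumes the queries for a page run one after another.

```python
def db_time_per_page_ms(queries_per_page: int, round_trip_ms: float, query_exec_ms: float) -> float:
    """Estimate the time one page spends waiting on the database.

    Assumes queries are issued sequentially, so each one pays the full
    network round trip plus its own execution time.
    """
    return queries_per_page * (round_trip_ms + query_exec_ms)


# Hypothetical workload: 20 queries per page, 1 ms of execution time each.
same_datacenter = db_time_per_page_ms(20, round_trip_ms=0.5, query_exec_ms=1.0)
cross_region = db_time_per_page_ms(20, round_trip_ms=40.0, query_exec_ms=1.0)

print(f"same data center: {same_datacenter:.0f} ms")  # 30 ms
print(f"cross region: {cross_region:.0f} ms")         # 820 ms
```

Under these assumed numbers, the same page goes from about 30 ms to about 820 ms of database wait time, which is why keeping the two servers close together on the network matters.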

Configuring a Reverse Proxy Load Balancer

Load balancers can be added to a server environment to improve performance and reliability. They distribute the workload across multiple servers, so that if one server fails, the others handle the incoming traffic until the failed server recovers. Additionally, load balancers can serve multiple applications through the same domain and port by acting as a layer 7 (application layer) reverse proxy. Notable software options for reverse proxy load balancing include HAProxy, Nginx, and Varnish.

One use case is an environment that needs to scale by adding more servers, which is known as horizontal scaling. With a load balancer in place, you can increase the capacity of the system by adding servers behind it. Additionally, the load balancer can offer some protection against DDoS attacks by limiting client connections to a reasonable number and frequency.
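
The idea of limiting each client to a reasonable number of requests can be sketched as a small sliding-window limiter. This is only an illustration of the concept, not how HAProxy, Nginx, or Varnish implement it; the class name, the limits, and the client identifiers are all hypothetical.

```python
import time
from collections import defaultdict, deque


class SlidingWindowLimiter:
    """Allow at most `max_requests` per client within `window_seconds`."""

    def __init__(self, max_requests, window_seconds, clock=time.monotonic):
        self.max_requests = max_requests
        self.window_seconds = window_seconds
        self.clock = clock  # injectable so the limiter can be tested without sleeping
        self._hits = defaultdict(deque)  # client id -> timestamps of accepted requests

    def allow(self, client_id):
        now = self.clock()
        hits = self._hits[client_id]
        # Forget accepted requests that have aged out of the window.
        while hits and now - hits[0] >= self.window_seconds:
            hits.popleft()
        if len(hits) >= self.max_requests:
            return False  # over the limit: reject or delay this request
        hits.append(now)
        return True


# Hypothetical limit: 100 requests per client every 10 seconds.
limiter = SlidingWindowLimiter(max_requests=100, window_seconds=10)
print(limiter.allow("203.0.113.7"))
```

A real load balancer would typically key the limit on the client address and apply it before the request reaches any backend.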

If a load balancer is under-resourced or poorly configured, it can become a performance bottleneck. It also introduces additional complexity, such as deciding where to perform SSL termination and how to handle applications that require sticky sessions. Moreover, the load balancer is a single point of failure: if it fails, your entire service can be affected. To establish a high availability (HA) setup that avoids a single point of failure, you can refer to our documentation on Reserved IPs. Additionally, you can find more information in our guide An Introduction to HAProxy and Load Balancing Concepts. The following image depicts a load balancer setup.

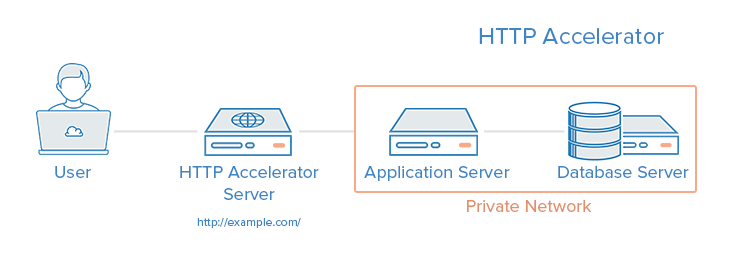
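
As a minimal sketch of the behavior described in this section, the following class hands out backends in round-robin order and skips any backend that has been marked as failed. Round-robin is just one possible balancing strategy, chosen here for simplicity; the class name, method names, and backend names are hypothetical.

```python
class RoundRobinBalancer:
    """Pick backends in turn, skipping any that are currently marked down."""

    def __init__(self, backends):
        self.backends = list(backends)
        self.healthy = set(self.backends)
        self._next = 0  # index of the next backend to try

    def mark_down(self, backend):
        self.healthy.discard(backend)

    def mark_up(self, backend):
        if backend in self.backends:
            self.healthy.add(backend)

    def pick(self):
        # Try each backend at most once per call, starting where we left off.
        for _ in range(len(self.backends)):
            backend = self.backends[self._next]
            self._next = (self._next + 1) % len(self.backends)
            if backend in self.healthy:
                return backend
        raise RuntimeError("no healthy backends available")


balancer = RoundRobinBalancer(["app-1", "app-2", "app-3"])
print([balancer.pick() for _ in range(3)])

balancer.mark_down("app-2")  # simulate a failed server
print([balancer.pick() for _ in range(4)])
```

Adding capacity in this model means adding another name to the backend list, which is the essence of horizontal scaling behind a load balancer.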

Configuring an HTTP accelerator (a caching reverse proxy)

An HTTP accelerator, also known as a caching HTTP reverse proxy, can speed up content delivery to users through a variety of techniques. The primary technique is caching server responses in memory, so that subsequent requests for the same content can be served quickly, without unnecessary interaction with the web or application servers. Varnish, Squid, and Nginx are a few examples of software that can perform HTTP acceleration. This type of solution is particularly useful in environments with content-heavy dynamic web applications or with many frequently accessed files.

An HTTP accelerator improves website performance by reducing the CPU load on the web server through caching and compression, which in turn increases the number of users the site can serve. It can also act as a reverse proxy load balancer, and some caching software can also guard against DDoS attacks. Note that if the cache-hit rate is low, the accelerator may actually reduce performance, so getting the best results requires careful configuration and tuning. Below is a visual depiction of an HTTP accelerator setup.

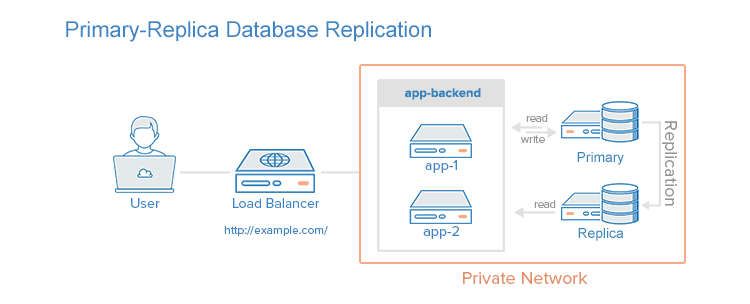
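
The core caching idea, along with the cache-hit rate mentioned above, can be sketched as follows. This is a simplified in-memory model and not how Varnish, Squid, or Nginx are implemented; the class name, the `fetch_from_origin` callback, and the 30-second time-to-live are assumptions made for illustration.

```python
import time


class ResponseCache:
    """Serve repeated requests from memory and track the cache-hit rate."""

    def __init__(self, fetch_from_origin, ttl_seconds=60.0, clock=time.monotonic):
        self.fetch_from_origin = fetch_from_origin  # called only on a cache-miss
        self.ttl_seconds = ttl_seconds
        self.clock = clock
        self._store = {}  # url -> (expires_at, body)
        self.hits = 0
        self.misses = 0

    def get(self, url):
        now = self.clock()
        entry = self._store.get(url)
        if entry is not None and entry[0] > now:
            self.hits += 1
            return entry[1]
        # Missing or expired: go to the origin and remember the response.
        self.misses += 1
        body = self.fetch_from_origin(url)
        self._store[url] = (now + self.ttl_seconds, body)
        return body

    def hit_rate(self):
        total = self.hits + self.misses
        return self.hits / total if total else 0.0


origin_calls = []


def slow_origin(url):
    """Stand-in for the web or application server."""
    origin_calls.append(url)
    return f"<html>content for {url}</html>"


cache = ResponseCache(slow_origin, ttl_seconds=30)
for _ in range(4):
    cache.get("/index.html")
print(len(origin_calls), cache.hit_rate())
```

When most requests are for distinct URLs, nearly every lookup is a miss and the cache only adds an extra hop, which is the low hit-rate problem described above.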

Establishing replication of a primary database to a replica database.

Primary-replica database replication is one way to improve the performance of a database system that performs many more reads than writes, such as a content management system (CMS). Replication requires a primary node that receives all updates and one or more replica nodes across which read requests can be distributed. This approach is useful when the goal is to increase the read performance of the database tier of an application. Spreading reads across the replicas improves read performance, and write performance also improves because the primary node handles updates exclusively and spends no time serving read requests.
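
The read/write split described above can be sketched as a small router that sends reads to the replicas in turn and everything else to the primary. This is a deliberately naive illustration: the class name and node names are hypothetical, and classifying a statement by its first keyword ignores cases such as reads that must see a just-written value.

```python
import itertools


class ReplicatedDatabaseRouter:
    """Choose which database node should receive a SQL statement."""

    def __init__(self, primary, replicas):
        replicas = list(replicas)
        if not replicas:
            raise ValueError("at least one replica is required")
        self.primary = primary
        self._replicas = itertools.cycle(replicas)  # rotate reads across replicas

    def node_for(self, sql):
        words = sql.lstrip().split(None, 1)
        verb = words[0].upper() if words else ""
        if verb == "SELECT":
            return next(self._replicas)
        # INSERT, UPDATE, DELETE, DDL, and anything unrecognized go to the primary.
        return self.primary


router = ReplicatedDatabaseRouter("db-primary", ["db-replica-1", "db-replica-2"])
print(router.node_for("SELECT title FROM posts"))
print(router.node_for("UPDATE posts SET title = 'Hello' WHERE id = 1"))
```

In practice this routing usually lives in the application's database layer or in a proxy placed in front of the database nodes.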

One drawback of primary-replica database replication is that the application using the database needs a way to determine which database nodes should receive update requests and which should receive read requests. Additionally, if the primary node fails, no updates can be made to the database until the problem is resolved. There is also no built-in failover mechanism for the primary node. Below is a visual representation of a primary-replica replication configuration with a single replica node.

Merging the Ideas.

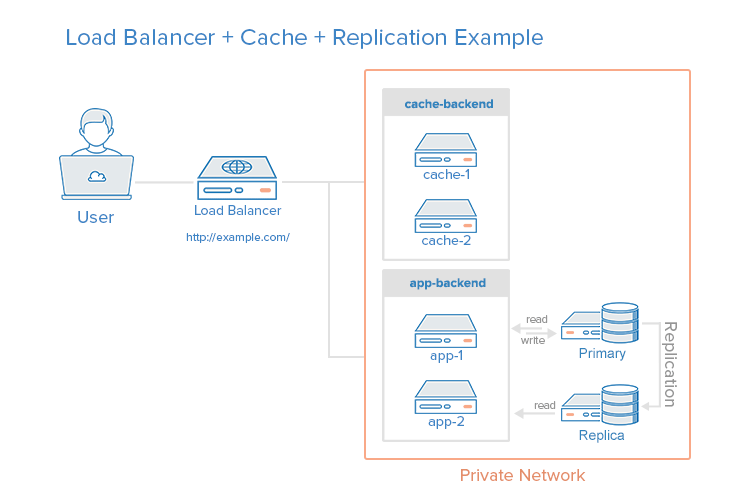

It is possible to load balance both the caching servers and the application servers within a single environment while also using database replication. The goal of combining these techniques is to gain the benefits of each without introducing too many problems or too much complexity. Below is an example diagram of such a server environment.

For example, suppose the load balancer is configured to recognize static requests (such as images, CSS, and JavaScript) and send them directly to the caching servers, while routing all other requests to the application servers.

Here is what happens, step by step, when a user requests dynamic content:

- The user requests dynamic content from http://example.com/ through the load balancer.

- The load balancer forwards the request to the app-backend.

- The app-backend retrieves the requested content from the database and sends it back to the load balancer.

- The load balancer delivers the requested data back to the user.

When a user requests static content, the following procedure is used:

- The load balancer checks with the cache-backend whether the requested content is cached (cache-hit) or not (cache-miss).

- On a cache-hit, the requested content is returned to the load balancer, which proceeds to the final step and returns the data to the user.

- On a cache-miss, the cache server sends the request to the app-backend through the load balancer.

- The load balancer forwards the request to the app-backend.

- The app-backend retrieves the requested content from the database and returns it to the load balancer.

- The load balancer forwards the response to the cache-backend.

- The cache-backend caches the content and sends it back to the load balancer.

- Finally, the load balancer returns the requested data to the user.
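
The two request flows above can be modeled in a few lines. This is a simplified sketch under stated assumptions: the class names, the file suffixes used to detect static requests, and the dictionary standing in for the database are all hypothetical, and the extra hop through the load balancer on a cache-miss is collapsed into a direct call to the app-backend.

```python
STATIC_SUFFIXES = (".png", ".jpg", ".css", ".js")  # assumed rule for "static" requests


class AppBackend:
    """Application server that reads content from the database."""

    def __init__(self, database):
        self.database = database  # a dict stands in for the real database

    def handle(self, path):
        return self.database[path]


class CacheBackend:
    """Caching server that falls back to the app-backend on a cache-miss."""

    def __init__(self, app_backend):
        self.app_backend = app_backend
        self.store = {}

    def handle(self, path):
        if path in self.store:
            return self.store[path], "cache-hit"
        content = self.app_backend.handle(path)
        self.store[path] = content  # remember the response for next time
        return content, "cache-miss"


class LoadBalancer:
    """Send static requests to the cache-backend, everything else to the app-backend."""

    def __init__(self, cache_backend, app_backend):
        self.cache_backend = cache_backend
        self.app_backend = app_backend

    def handle(self, path):
        if path.endswith(STATIC_SUFFIXES):
            return self.cache_backend.handle(path)
        return self.app_backend.handle(path), "dynamic"


database = {"/": "home page", "/logo.png": "PNG bytes"}
app = AppBackend(database)
balancer = LoadBalancer(CacheBackend(app), app)

for path in ["/", "/logo.png", "/logo.png"]:
    print(path, balancer.handle(path))
```

The second request for `/logo.png` is answered from the cache without touching the app-backend or the database, which is where the performance benefit of this combined setup comes from.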

This environment still has two single points of failure, the load balancer and the primary database server, but it retains all the other reliability and performance benefits described in the sections above.

In summary

Now that you have learned the basics of these server setups, you should have a clearer idea of which setup suits your own application(s). If you are improving your own environment, remember that a gradual, iterative approach works best, so that you avoid introducing too much complexity at once.
